|

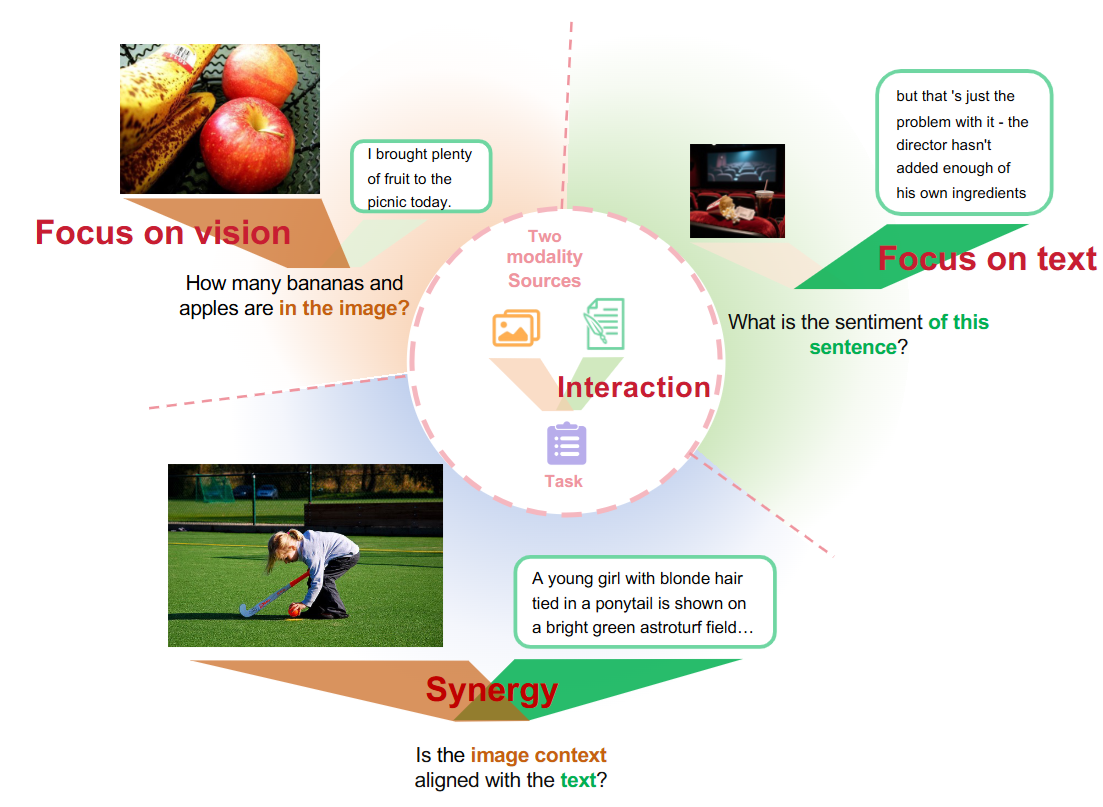

I am a fourth-year Ph.D. student at the Gaoling School of Artificial Intelligence, Renmin University of China, advised by Prof. Di Hu and co-advised by Prof. Feiping Nie. My research interests center on the mechanisms of multimodal learning and multimodal interaction, especially understanding and quantifying complex interactions (synergy, redundancy, and uniqueness) from an information-theoretic perspective. Recently, I have focused on vision-language collaboration mechanisms and pretraining strategies in multimodal large language models (MLLMs). I received my Bachelor's degree in Automation from Beihang University in 2022. |

|

|

[2026-03] One paper accepted by CVPR 2026, thanks to all co-authors!

[2025-05] One paper accepted by ICML 2025, thanks to all co-authors!

[2025-03] One paper accepted by CVPR 2025, thanks to all co-authors!

[2024-01] One paper accepted by ICLR 2024, thanks to all co-authors!

[2023-11] One paper accepted by Pattern Recognition, thanks to all co-authors! |

|

Reviewer: ICLR 2024-2026, ICML 2024-2026, CVPR 2024-2026, AAAI 2024-2025, NeurIPS 2025, IJCAI 2025 |

|

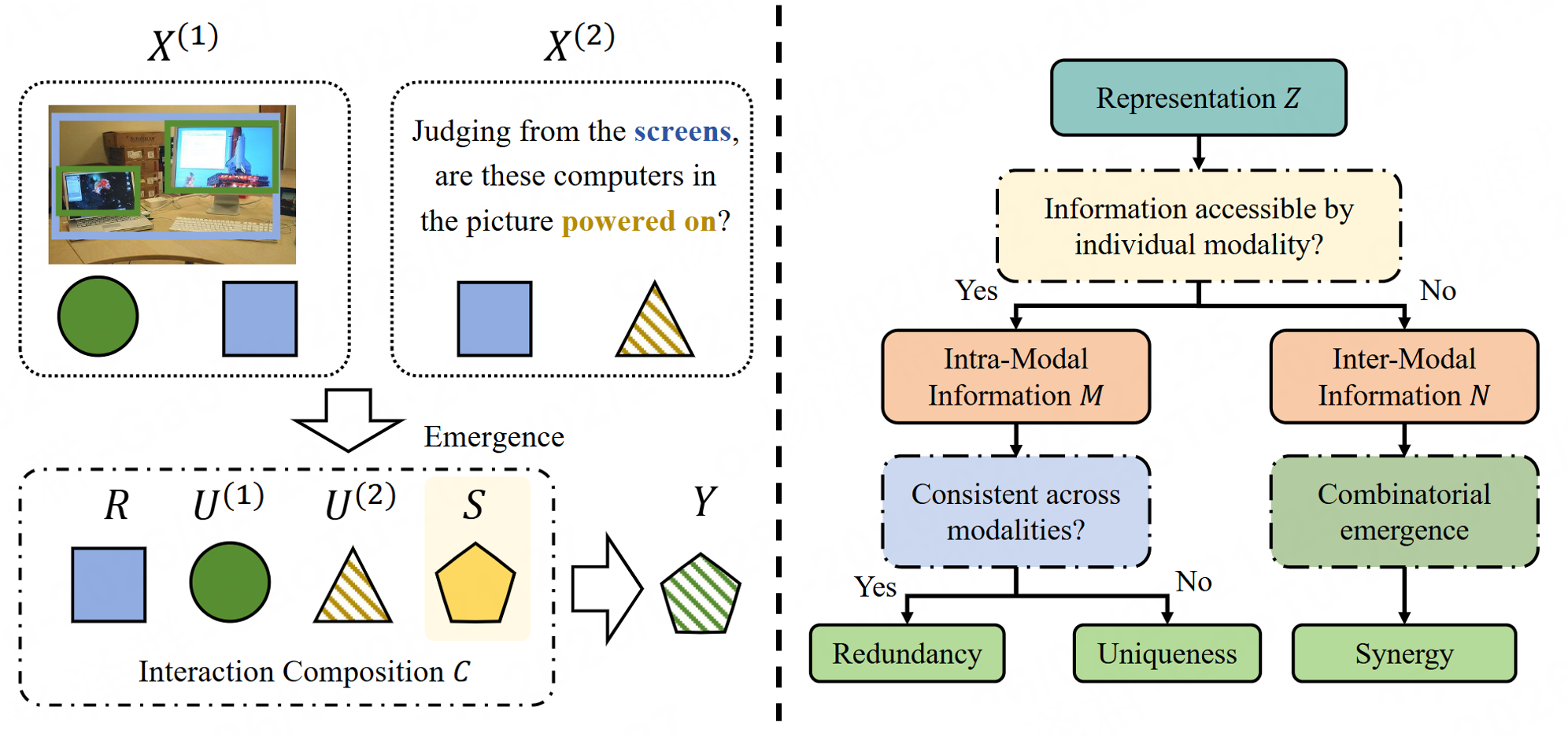

Zequn Yang, Yake Wei, Haotian Ni, Zhihao Xu, Di Hu

CVPR 2026

Information-theoretic multimodal interaction decomposition |

|

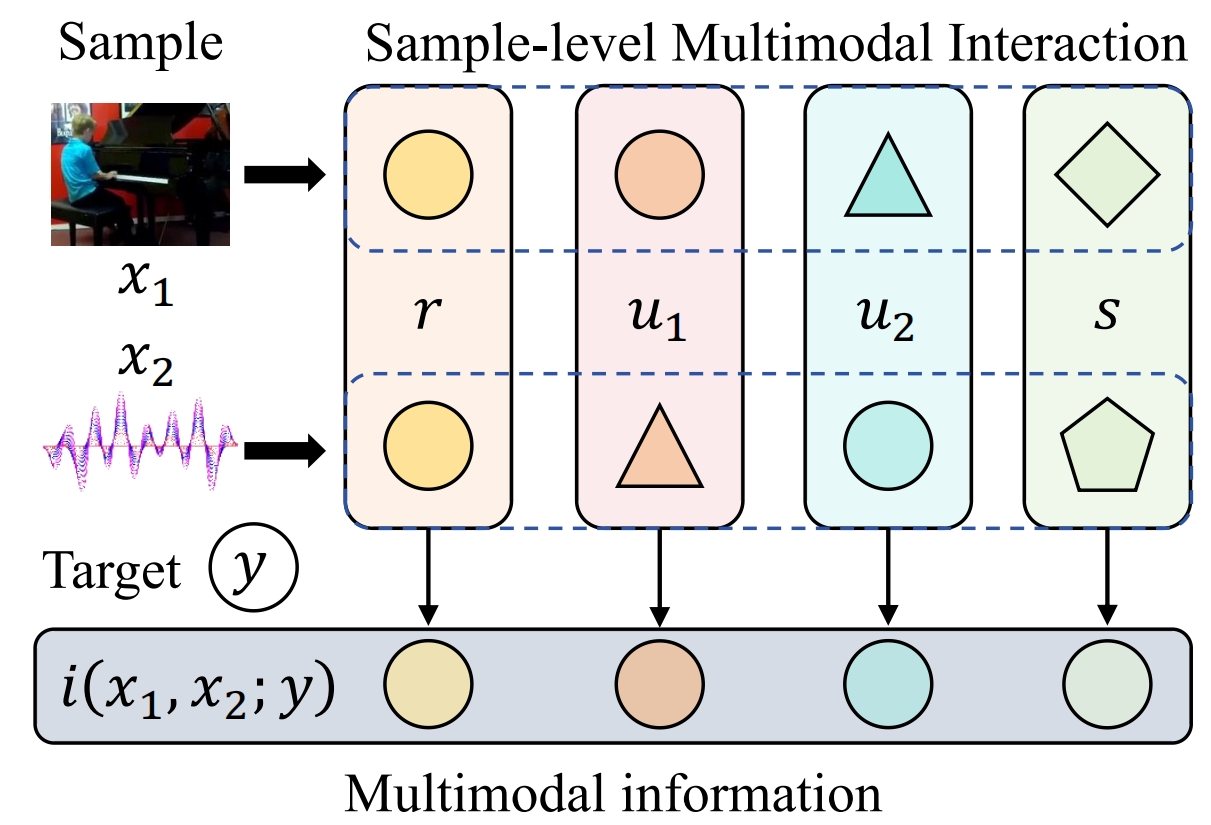

Zequn Yang, Hongfa Wang, Di Hu

ICML 2025

arXiv / code

Multimodal interaction quantification |

|

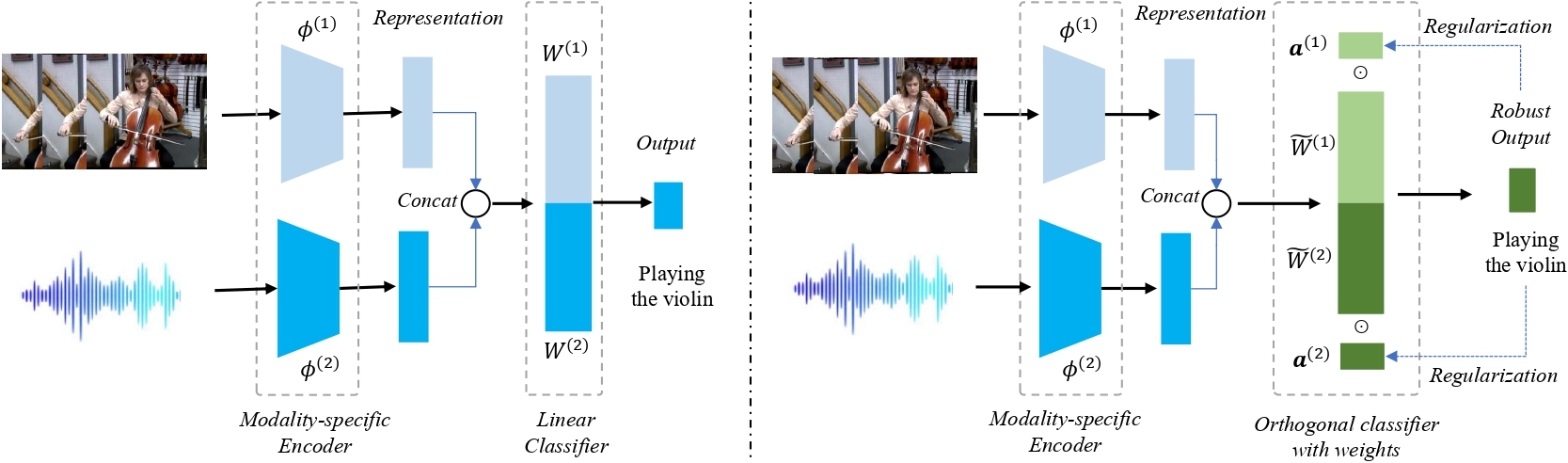

Zequn Yang, Yake Wei, Ce Liang, Di Hu

ICLR 2024

arXiv / code

Multi-modal Robustness |

|

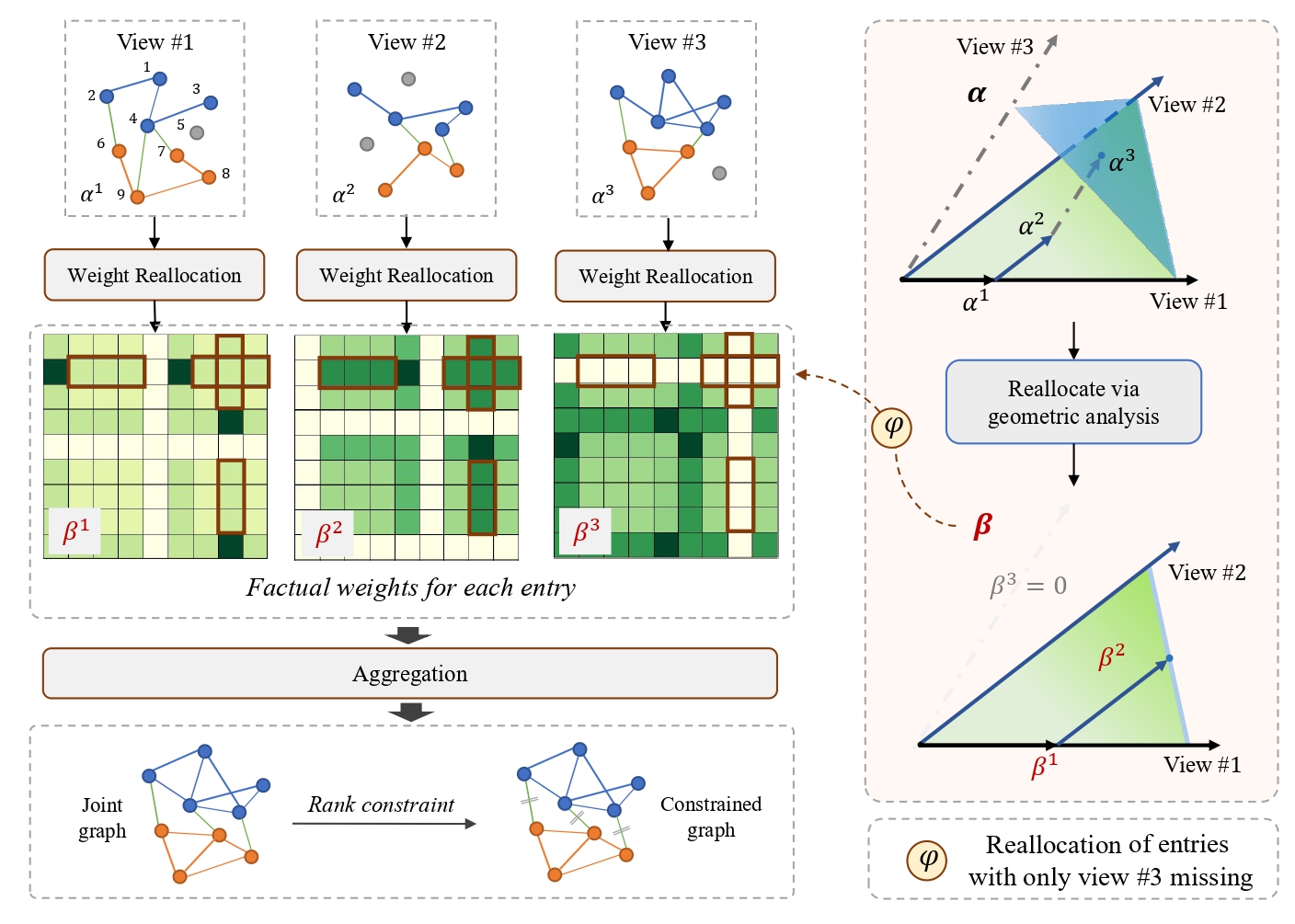

Zequn Yang, Han Zhang, Yake Wei, Zheng Wang, Feiping Nie, Di Hu

Pattern Recognition

paper / code

Incomplete multi-view clustering |

|

Yu Miao*, Zequn Yang*, Yake Wei, Ziheng Chen, Haotian Ni, Haodong Duan, Kai Chen, Di Hu (* equal contribution)

arXiv preprint, 2026

arXiv

Multimodal interaction benchmark for large multimodal models |

|

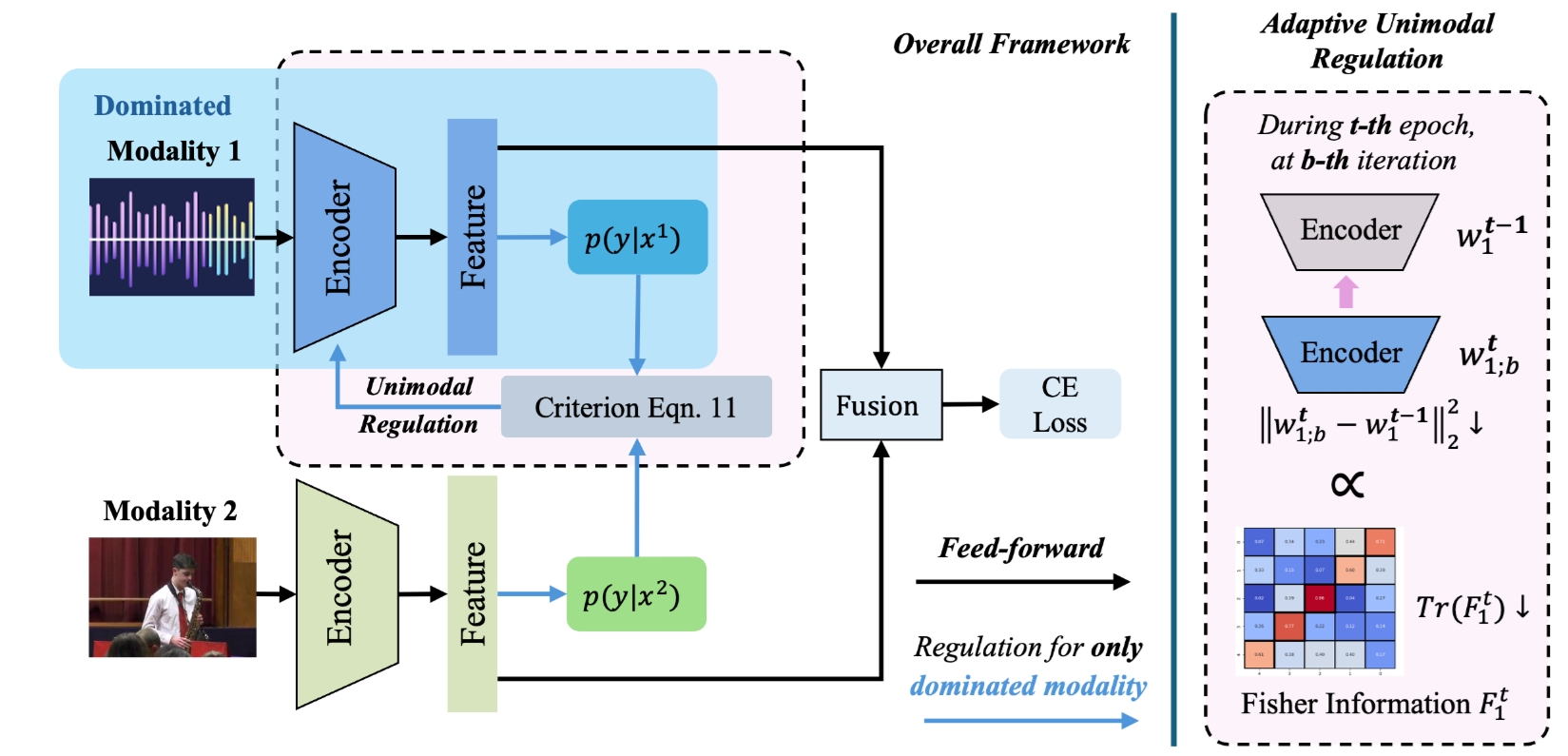

Chengxiang Huang, Yake Wei, Zequn Yang, Di Hu

CVPR 2025

arXiv / code

Adaptive Multimodal Learning for Balanced Information Acquisition |

|

Last updated: Mar. 2026

Thanks to Jon Barron and Yake Wei for this elaborate template.

|